Last week I attended a two-day event on Open Research Data: Implications and Society. The event was held at Warsaw’s University Library, close to the old district, and it took place while the students were actually studying in the library.

The event was sponsored by the Research Data Alliance and OpenAIRE, among others, with presenters from institutions like CERN, companies that aim to facilitate the publication of scientific data like Figshare (or that benefit from it, like Altmetric), and people from the editorial world like Elsevier and Thomson Reuters. Lidia Stępińska-Ustasiak was the main organizer of the event, and she did a fantastic job. My special thanks to her and her team.

In general, the audience was very friendly and willing to learn more about the problems raised by the presenters. The program was packed with keynotes and presentations, which made it quite a non-stop conference.

What I presented

I attended the event to talk about Research Objects and our approach to preserving them properly by using checklists. Check the slides here. In general, our proposal was well received, even though much work is still necessary to make it happen as a whole. Applications like RODL or MyExperiment are a first step towards achieving reproducible publications.

What I liked

The environment, the talks (kept to 10 minutes for the short talks and 25 for keynotes), people staying to hear others and not running away after their presentations, and all the discussions that happened during and after the event.

What I missed

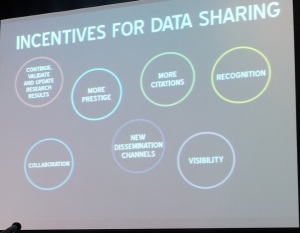

Even though I enjoyed the event very much, I missed some innovative incentives for scientists to actually share their methods and their data. Credit and attribution were the main reasons given by everyone to share their data. However, these are long-term benefits. For instance, after sharing the data and methods I have used in several papers as Research Objects, I have noticed that it really takes a lot of time to document everything properly. It pays off in the long term when you (or others) want to reuse your own data, but not immediately. Thus, I can imagine that other scientists may use this as an excuse to avoid publishing their data and workflow when they publish the associated paper. The paper is the documentation, right?

My question is: can we provide a benefit for sharing data/workflows that is immediate? For example: if you publish the workflow, the “Methods” page of your paper will be written automatically, or you will have an interactive drawing that looks supercool in your paper, etc. I haven’t found an answer to this question yet, but I hope to see some progress in this direction in the future.

But enough with my own thoughts, let’s stick to the content. I summarize both days below.

Day 1

After the welcome message, Marek Niezgódka introduced the efforts made in Poland towards open research data. The Polish Digital Library now offers access to all scientific publications for everyone, in order to promote Polish scholarly bibliography in the scientific world. Since Polish is not an easy language, they are investing in the development of tools and projects like WordNet and Europeana.

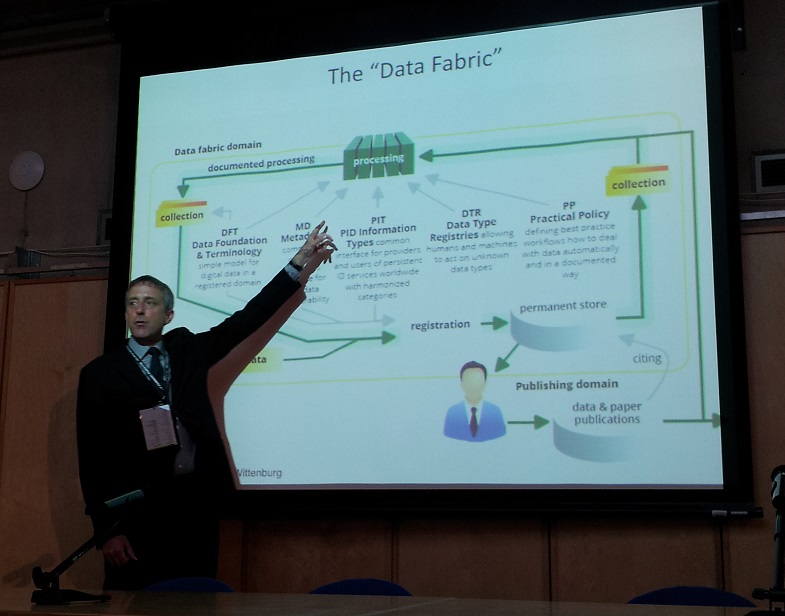

Mark Parsons (Research Data Alliance) followed by describing the problem of replicating scientific results. Before working at RDA, he used to work at the NSIDC, which observes and measures climate change. Apparently, some results were really hard to replicate because different experts understood concepts differently. For example, the term “ice edge” is defined differently in several communities. Open data is not enough: we need to build bridges among different communities of experts, and this is precisely the mission of RDA. With more than 30 working and interest groups integrating people from industry and academia, RDA aims to improve the “data fabric” by building foundational terminologies, enabling discovery among different registries and standardizing methodologies between different communities:

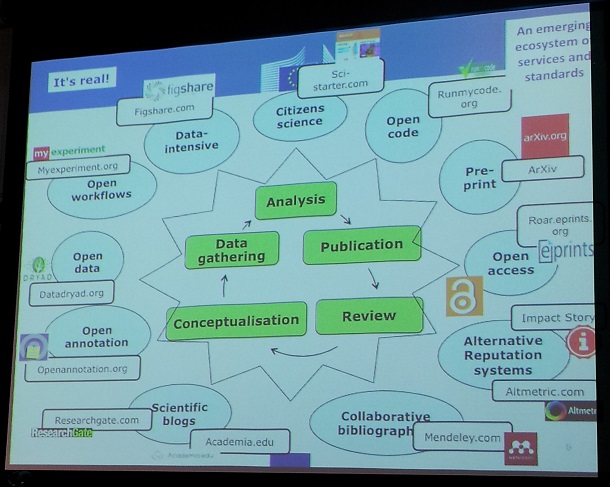

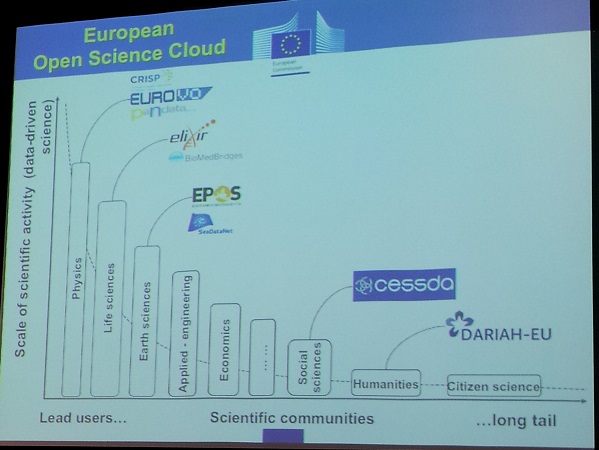

Jean-Claude Burgelman (European Commission) provided a great overview of the open research lifecycle:

The presenter described the current concerns about open access in the European Commission, and how they are proposing a bottom-up approach by enabling a pilot for open research data, which has provided encouraging preliminary results.

Although data is currently being opened in some areas (see picture below), it is good to see that the European Commission is also focusing on infrastructures, hosting, intellectual property rights and governance. For example, in the open pilot even patents are possible under the open data policy.

The talk ended with an interesting thought: high-impact journals account for less than 1% of scientific production.

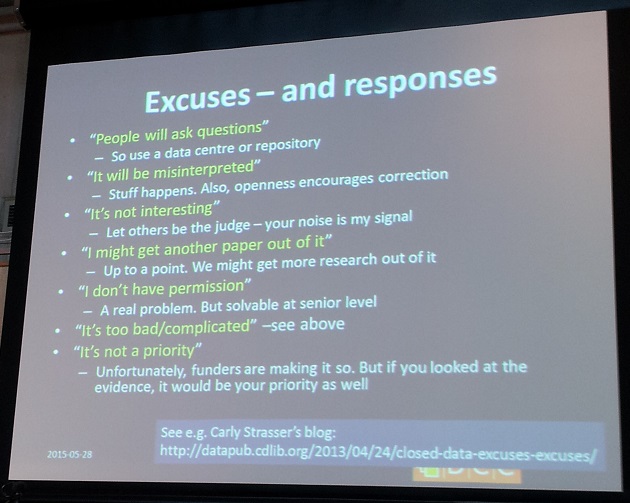

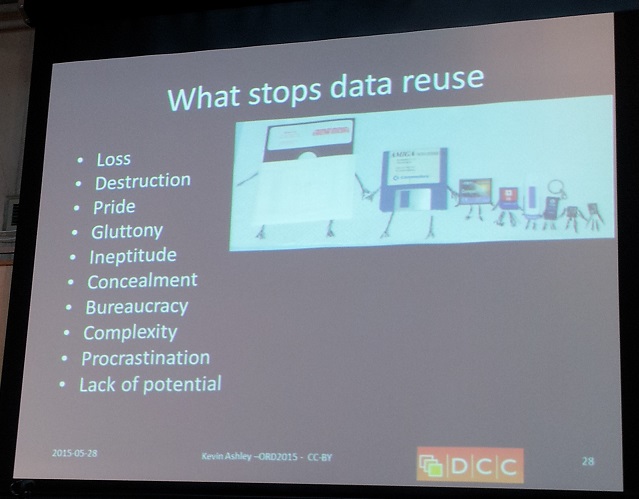

The next presenter was Kevin Ashley, from the British Digital Curation Centre. Kevin started his talk with the benefits of data sharing, both from a selfish view (credit) and the community view (for example, data from archaeology has been used by paleontology experts). Good research needs good data, and what some people consider noise could be a valuable input for other researchers in different areas.

I liked how Kevin provided some numbers regarding the maintenance of an infrastructure for open access to research papers. Assuming that only 1 out of 100 papers is reused, in 5 years we could save up to 3 million per year from buying papers online. Also, linking publications and data increases their value. Open data combined with closed software, on the other hand, is a barrier.

The talk ended with the typical reasons people give for not sharing their data, as well as the main problems that actually stop data reuse:

The day continued with a set of quick presentations.

- Giulia Ajmone (OECD) introduced open science policy trends by using the “stick and carrot” metaphor: carrots are financial incentives, proper acknowledgement and attribution, while the sticks are the mandatory rules necessary to make them happen. Individual policies exist at the national level in many countries.

- Magdalena Szuflita (Gdańsk University of Technology) tried to identify additional benefits of data sharing by conducting a survey in economics and chemistry (areas where the researchers didn’t share their data).

- Ralf Toepfer (Leibniz centre of economics) provided more details on open research data in economics, where up to 80% of the researchers do not share their data (although the majority think other people should share theirs). I personally find this very shocking in an environment where trust and credibility are key, as some of these studies might be the cause of big political changes.

- Marta Teperek (University of Cambridge) talked about the training activities and workshops for sharing data at the University of Cambridge.

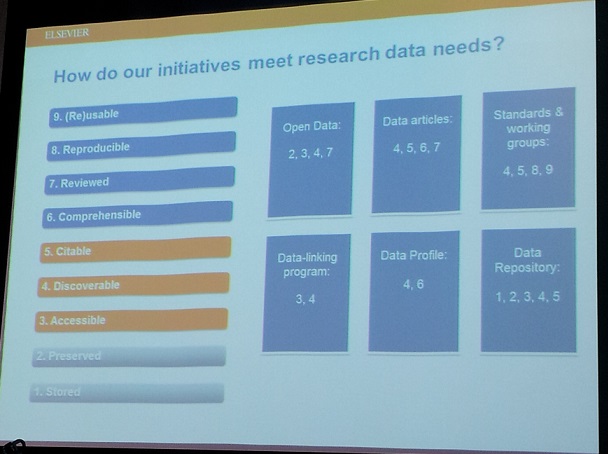

- Helena Cousijn (Elsevier) described ways for researchers to store, share and discover data. I liked the slide comparing the research initiatives versus the research needs (see below). I also learnt that Elsevier has a data repository where they assign DOIs, and up to 2 data journals.

- Marcin Kapczyński introduced the data citation index they are developing at Thomson Reuters, which covers 240 high-value multidisciplinary repositories. A cool feature is that it can distinguish between datasets and papers.

- Monica Rogoza (National Library of Poland) presented an approach to connect their digital library to other repositories, providing a set of tools to visualize and detect pictures in texts.

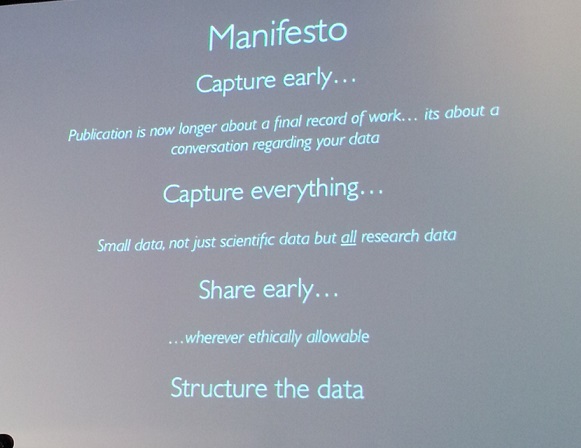

The day ended with some tools and methodologies for opening data in different domains. Daniel Hook, from Figshare, gave the invited talk, appealing to our altruism rather than our selfishness as the reason for sharing data. He surveyed the different ages of research: individual research led to the age of enlightenment, institutional research to an age of evaluation, national research to an age of collaboration and international research to an age of impact. Unfortunately, sometimes impact might be a step back from collaboration. Most of the data is still hidden in Dropbox or on pen drives, and when institutions share it we find three common cases: 1) they are forced to do it, in which case the budget for accomplishing it is low; 2) they are really excited to do it, but it is not a requirement; and 3) they may not understand the infrastructure, but they aim to provide tools to allow authors to collaborate internationally.

And finally, a manifesto:

The short talks can be summarized as follows:

- Marcin Wichorowsky (University of Warsaw) talked about the GAME project database, which aims to integrate oceanographic data repositories and link them to social data.

- Alexander Nowinsky (University of Warsaw) described COCOs, a cosmological simulation database which aims at storing large-scale simulations of the universe (with just 2 datasets they are over 100 TB!).

- Marta Hoffman (University of Warsaw) introduced RepOD, the first repository for open data in Poland, complementary to other platforms like the Open Science Platform. It adapts CKAN and focuses explicitly on research data.

- Henry Lütke (ETH Zurich) described their publication pipeline for scientific data, which uses openBIS for data management, electronic notebooks and OAI-PMH to track the metadata. It is integrated with CKAN as well.

Day 2

The second day was packed with presentations as well. Martin Hamilton (Jisc) gave the first keynote by analyzing the role of the pioneer. Assuming that in 2030 there will be tourists on Mars, what are the main factors that could make it possible? Who were the pioneers that pushed this effort forward? For example, Tesla Motors will not initiate any lawsuit against someone who, in good faith, wants to use their technology for the greater good. This is the kind of example we need to see for research data as well. New patrons may arise (e.g., Google, Amazon, etc. give awards as research grants) and there will be a spirit of co-opetition (i.e., groups with opposite interests working together on the same problem), but by working together we could address the issue of open access to research data and move towards other challenges like full reproducibility of scientific experiments.

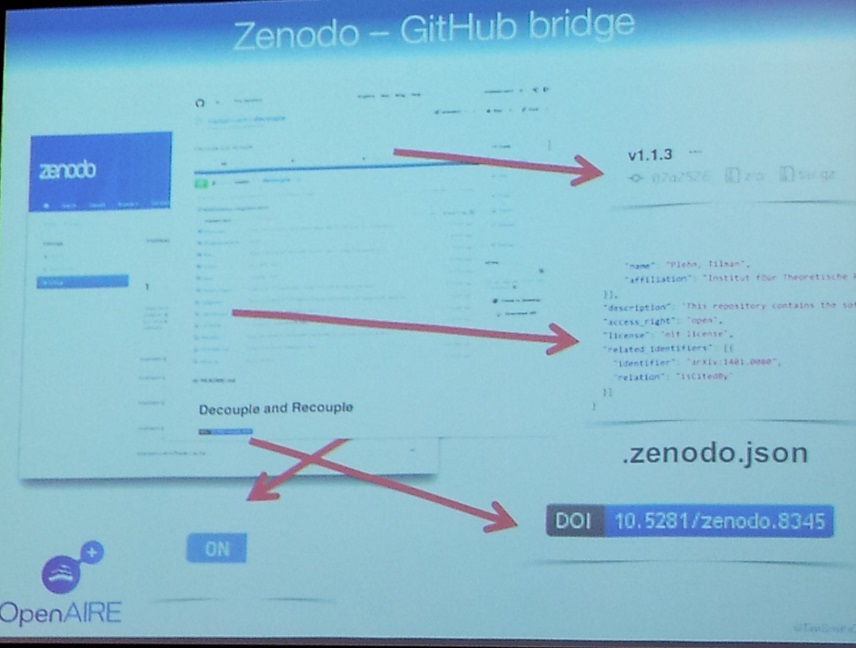

Tim Smith (CERN, Zenodo) followed by describing how we often find ourselves on the shoulders of secluded giants. We build on the work done by other researchers, but the shareability of data might be a burden in the process: “If you stand on the shoulders of hidden giants, you may not see too far”. Tim argued that researchers participating in the human collective enterprise that pushes research forward often look after their own best interest, and that by fostering feedback one’s own interest may become a collective interest. Of course, this also involves a scientist-centric approach providing access to the tools, data, materials and infrastructure that delivered the results. Given that software is crucial for producing research, Zenodo was presented as an application for collaborative development to publish code as part of the active research process (integrated with GitHub). The keynote ended by explaining how data is shared in an institution like CERN, where there are petabytes of data stored. Since all the data can’t be opened due to its size, only a set of selected data for education and research purposes is made public (currently around 40 TB). The funny thing is how opening data has actually benefitted them: they ran an open challenge asking people to improve their machine learning algorithm on the input data. Machine learning experts, who had no idea about the purpose of the data, won.

A set of short presentations came next:

- Pawel Krajewski presented the transplant project, a software infrastructure for plant scientists based on checklists for publishing the data. It follows the ISA-TAB format.

- Cinzia Daraio (Sapienza) described how to link heterogeneous data sources in an interoperable setting with their ontology-based (14 modules!) data management system. The ontology is used to represent indicators across different disciplines and to enable comparisons (e.g., opportunistic behavior).

- Kimil Wais (University of Information Technology and Management in Rzeszów) showed how to monitor open data automatically by using an application, Odgar, based on R for visualizing and computing statistics.

- Me: I presented our approach for preserving Research Objects by using checklists, as described above.

After the break, Mark Thorley (NERC-UK) gave the last invited talk. He presented Codata.org, an international group like RDA that follows a top-down approach instead of a bottom-up one. As described before, a huge problem concerns the knowledge translators: people who know how to talk to experts in different domains about their uses of data. In this regard, the role of the knowledge broker/intermediary is gaining relevance: people who know the data and know how to use it for other people’s needs. Rather than exposing the data, in Codata they are working towards exposing and exploiting (IP rights) the knowledge behind it.

A series of short talks followed the invited talk:

- Ben McLeish (Altmetric) described how their company looks for mentions of any research output using text mining: Reddit, YouTube, repositories, blogs, etc. They have come up with a new relevance metric based on donut-shaped graphics which can even show how your institution is doing and how engaging your work is.

- Krzysztof Siewicz (University of Warsaw) explained from the legal point of view how different data policies could interfere when opening data.

- Magdalena Rutkowska-Sowa (University of Białystok) finished up by describing the models for commercialization of R&D findings. With Horizon 2020, new policy models and requirements will have to be introduced.

The second day finished with a panel discussion with Tim Smith, Giulia Ajmone, Martin Hamilton, Mark Parsons and Mark Thorley as participants, discussing further some of the issues presented during both days. Although I didn’t take many notes, some of the discussion was about how enterprises could figure out open data models, data privacy, how to build services on top of open data and the value of making data available.